Background

NeRF learns a continuous volumetric representation of a scene from posed images, enabling novel view synthesis. In the few-shot regime (3–10 views), NeRF suffers from underdetermination: there are not enough observations to constrain the MLP. A natural idea is to condition NeRF on rich semantic features from foundation models like DINO (a self-supervised ViT), which can provide scene priors.

This paper asks: does DINO actually help when views are extremely scarce?

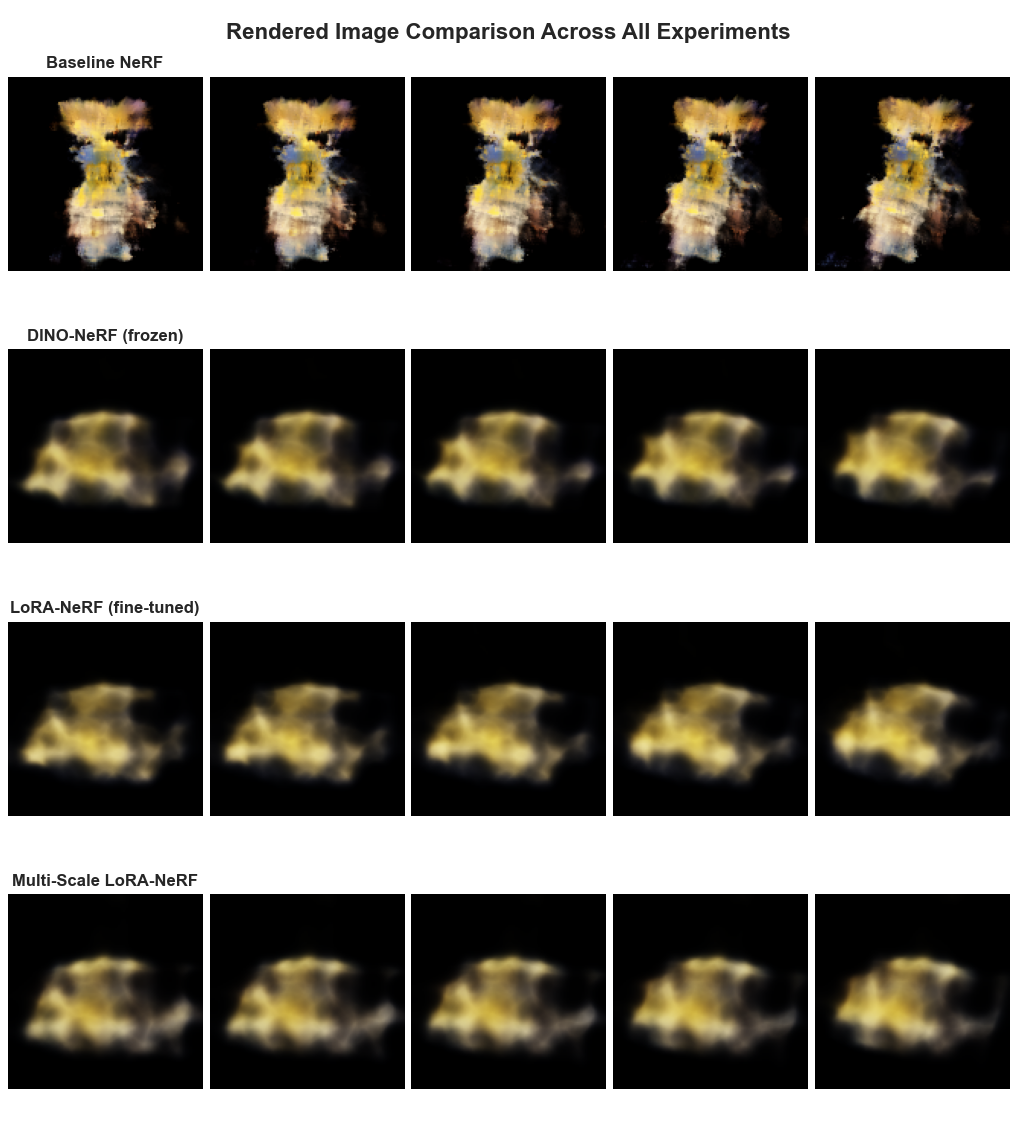

Models Evaluated

- Baseline NeRF: standard MLP + positional encoding + volume rendering, no external features

- DINO-NeRF (frozen): DINO patch features concatenated to NeRF input, weights frozen

- LoRA-NeRF: DINO features with Low-Rank Adaptation (LoRA) fine-tuning on few-shot views

- Multi-Scale LoRA-NeRF: multi-scale DINO feature pyramid fused with LoRA adaptation

Results

| Model | PSNR ↑ | SSIM ↑ | LPIPS ↓ |

|---|---|---|---|

| Baseline NeRF | 14.71 | 0.46 | 0.53 |

| DINO-NeRF (frozen) | 12.99 (−1.72) | 0.46 | 0.54 |

| LoRA-NeRF (fine-tuned) | 12.97 (−1.74) | 0.45 | 0.54 |

| Multi-Scale LoRA-NeRF | 12.94 (−1.77) | 0.44 | 0.54 |

Evaluated on the Blender Lego scene with extreme few-shot setup. Green = best. Red = worse than baseline.

Why Does DINO Hurt?

We hypothesize several causes for the degradation:

- Domain mismatch: DINO is trained on natural images; few-shot NeRF targets (e.g., Blender synthetic objects) have very different appearance statistics.

- Feature rigidity: Frozen DINO features encode ImageNet-level semantics, not the fine-grained 3D geometry NeRF needs to infer.

- Overfitting under LoRA: With only a handful of views, LoRA fine-tuning may overfit DINO features to specific view directions, harming generalization.

- Feature-geometry conflict: DINO features are 2D patch-level; conditioning a 3D volumetric MLP on them introduces an inductive bias mismatch.

Implications

Simpler, geometry-focused architectures may be more effective than feature-rich models for extreme few-shot 3D reconstruction. Future work should focus on geometric consistency losses, depth priors, and view-consistent regularization rather than semantic feature injection.

Citation

@article{sanjyal2024nerflimitations,

title = {Limitations of {NeRF} with Pre-trained Vision Features for Few-Shot 3D Reconstruction},

author = {Sanjyal, Ankit},

journal = {arXiv preprint arXiv:2506.18208},

year = {2025}

}